A day and a half ago I was evaluating budgeting apps. I had a spreadsheet method I'd been using for years that actually worked. The problem was the spreadsheet itself: a manual reset every month, no clean way to bring my wife into it (the budget logic was baked into the layout, and building a real UI on top of a spreadsheet wasn't worth the effort), and after a few years of history it had grown unwieldy enough that I couldn't easily run queries against my own data. So I went looking.

YNAB wanted me to adopt envelopes. Monarch wanted me connected to my bank accounts. Copilot wanted a subscription. The open source self-hosted options wanted me to learn their data model. Every option I evaluated wanted me to abandon the method I had — a method that worked — in exchange for their interpretation of how budgeting should feel.

At some point I just asked Claude: is it crazy to just build my own?

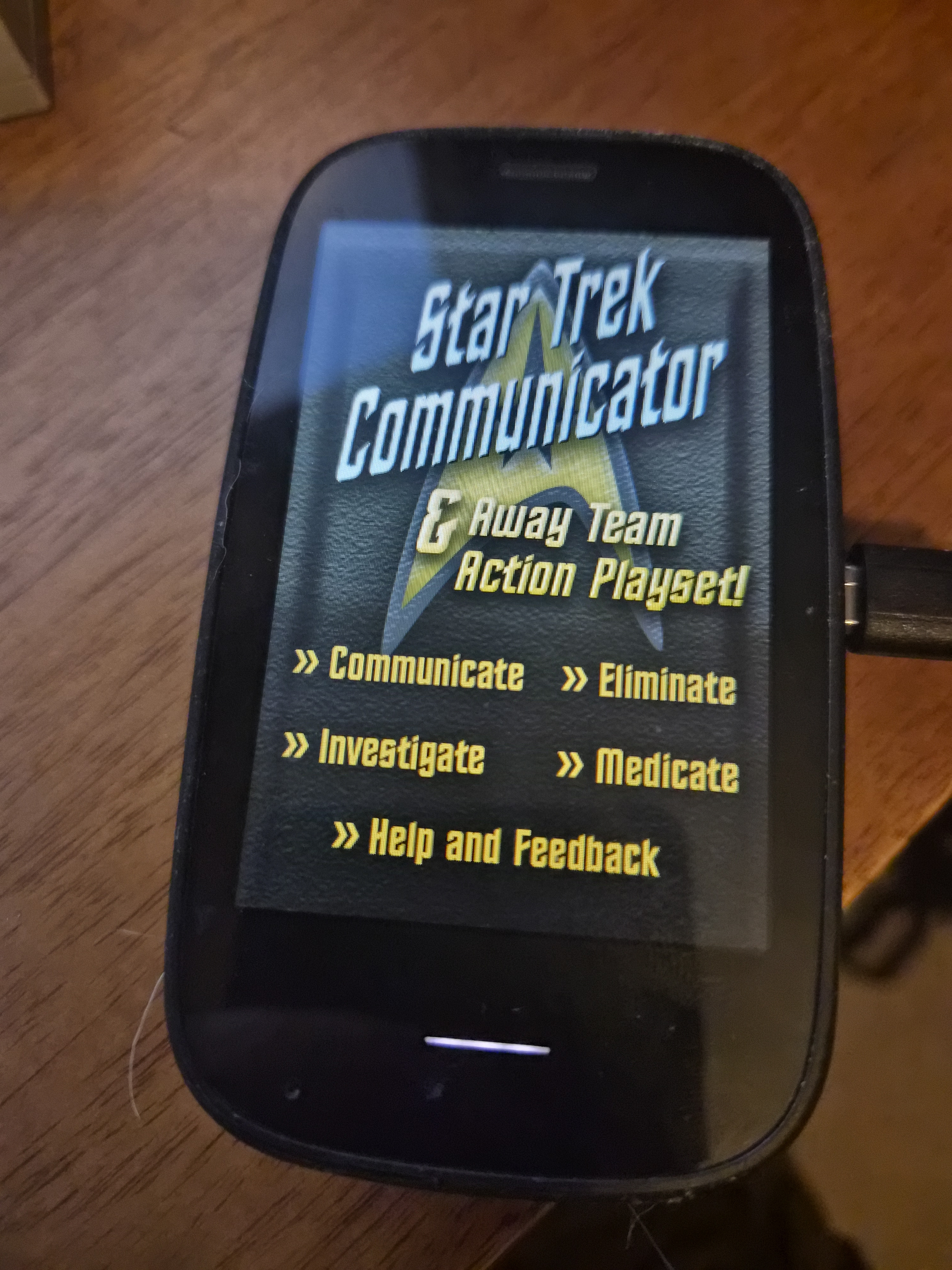

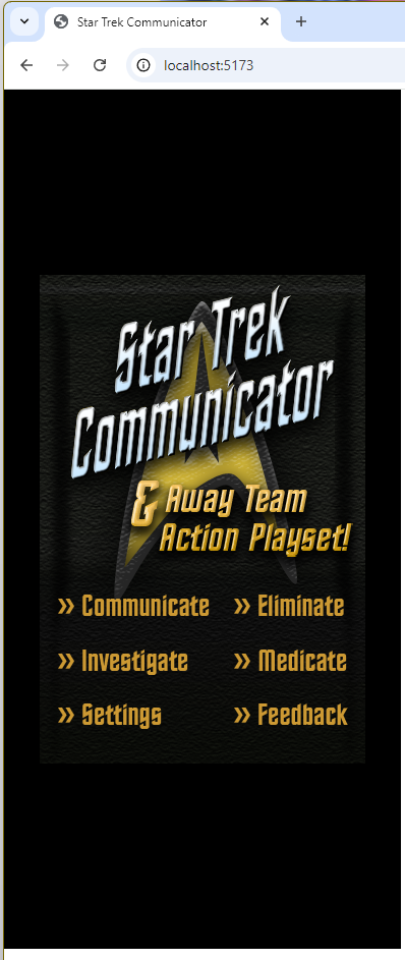

A day and a half later I had it. Built around my spreadsheet logic. Not connected to any bank. Not hosted anywhere external. Used every day.

That question — is it crazy to just build my own — is the article. Five years ago, yes. It was crazy. Today, in a growing number of cases, it isn't anymore. And the gap between those two answers is doing more to reshape software than most people are paying attention to.

The 80s called and they were budgeting

Personal finance software is not a new genre. It's one of the original genres.

Managing Your Money, by Andrew Tobias, shipped in 1984. Dollars and Sense before it. Quicken arrived around the same time. The software industry then was a much more distributed scene than today: dozens of small publishers shipping diskettes by mail, most of them now footnotes. The names that dominate tech now weren't yet the names that dominated tech. And before any of those apps, people were just building their own budgets in VisiCalc and Lotus 1-2-3, because that's what you did with a home computer. When the question came up (why would I buy one of these things?), "balance your checkbook" was right up there with "play games" as a top answer.

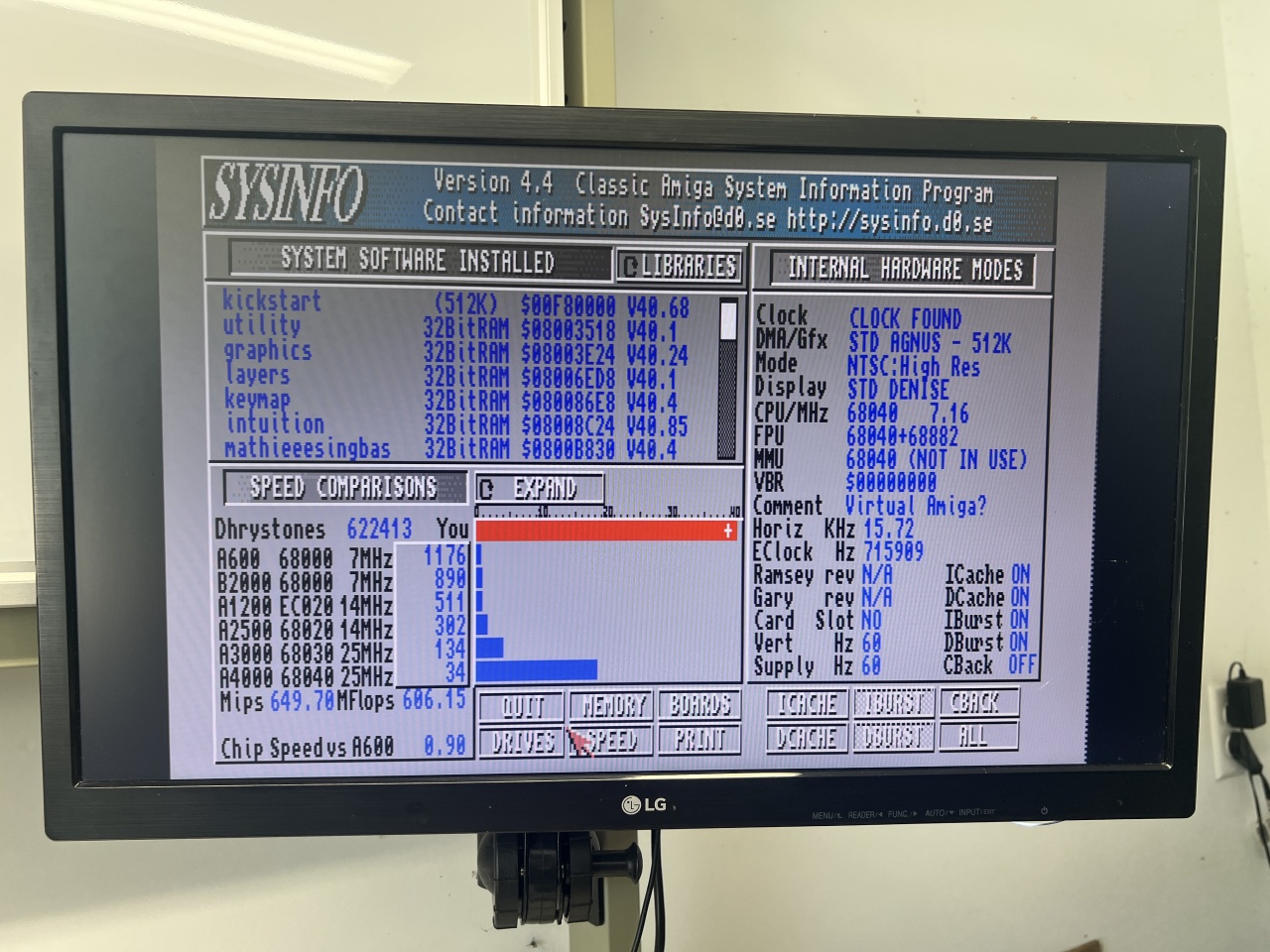

The 80s and early 90s home computer era was, in significant part, about personal software. You typed BASIC programs from magazines. You modified them. You wrote small utilities for your own life. The software you ran fit your life because you'd built it yourself, or you'd at least chosen it from a small enough pool that you knew which one matched your situation.

The decades since trained us out of that. Not by accident. Building software got increasingly punishing for individuals while consuming it got increasingly easy. SaaS won the consumer software war fair and square: cloud sync, mobile apps, multi-device, automatic updates, infrastructure you didn't have to babysit. In exchange you adopted whatever worldview the software embodied, because building your own version was a nights-and-weekends project measured in years.

That was a reasonable trade for a long time. For a lot of software it still is.

SaaS is not dead

There's a strain of AI hype right now insisting that vibe coding kills SaaS. It doesn't. I run a SaaS company. I'm not announcing my own funeral.

What actually happens is more interesting. SaaS retreats to the layers where it always belonged, and the personal layer comes back to the user.

SaaS is the right shape for multi-user coordination — anything with shared state across an organization, or a network effect, or a methodology that has to be consistent across everyone using it. No one is vibe coding a resident rewards platform that integrates with property management systems and handles payments. The whole point is that everyone in the network uses the same one.

SaaS is the right shape for compliance, security, and infrastructure burden. SOC 2, PCI, uptime guarantees, audit trails — the unglamorous load-bearing work my company spends real money on. Personal software gets to skip all of that precisely because it's personal. One user. No bank connection. No external hosting. That's a feature of personal software, but it's also why it doesn't scale to anything with real stakes for other people.

SaaS is the right shape for methodology-as-product. YNAB users love YNAB because the envelope method works for them and they want the software to enforce it. Someone adopting a new framework should absolutely buy the SaaS — the worldview is the value. Build-your-own only makes sense when you already have a method that works and the software is the friction, not the framework.

And SaaS is the right shape for infrastructure problems at scale. Stripe, Plaid, Twilio, the cloud providers themselves. No one is rebuilding payments. That layer is more entrenched than ever, and should be.

So this isn't a SaaS-is-dead piece. It's a more boring claim: SaaS expanded into the personal layer over the last twenty years because building software was too expensive for individuals, not because SaaS was the right shape for personal software. The fit was always a little strained; you've felt it every time an app told you to change how you think about something so it could help you. Now the economics are correcting. The personal layer goes back to the user.

Two flavors of personal software

The budget app was the cleanest version of this. Built the whole thing. The methodology was the personal part — my spreadsheet logic, my categories, my way of thinking about cash flow. No commercial option could capture that, because none of them are trying to. They're trying to sell me theirs.

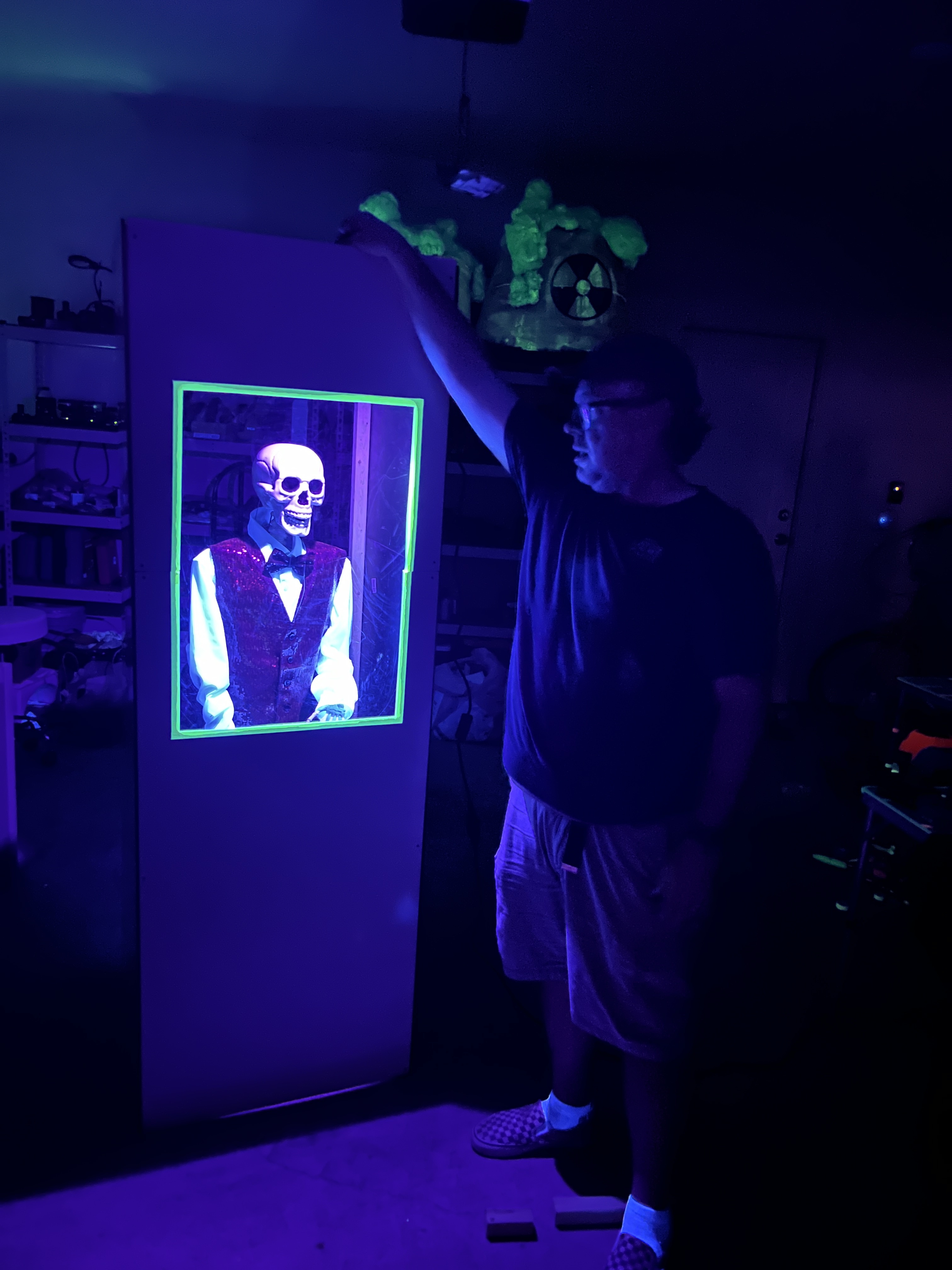

Simple Chores is the other flavor, and it makes a more interesting point.

We wanted a chore tracker that fit our household — me, my wife, eventually our daughter. Something utilitarian. Just remind us what needs doing. Every option I looked at either ran on the assumption that families need gamification, scoring, leaderboards, dollar bounties for taking out the trash — or was so complex it required a separate mental model just to set up. The Home Assistant community options were either too bloated or built around scheduling logic I didn't want. None of them were really for the way we live. Chores aren't a competition in our house. They're not a market. They're the things we do to keep our home running and to function as a family. I called the app Simple Chores. The manifesto is in the name.

I didn't build the whole thing. I built it as a Home Assistant app, leveraging HA's room model, its people model, its auth. Home Assistant had already done the household-modeling work better than I'd ever want to redo. What I built on top was the part that was actually personal: how chores get assigned, what counts as done, what the interface feels like when one of us walks into the kitchen. And — being honest — partly because it sounded fun to build something I had no clue how to build.

These are two different build/buy decisions at two different layers. The budget app needed to own its full stack because the methodology was the whole point. Simple Chores stood on top of an existing platform because the platform was already exactly right for the layer underneath. Both are personal software. Both fit my life because I made them fit. Neither would have been a reasonable use of my time five years ago.

The interesting practice is not "write everything from scratch." It's "architect which layer is yours." Where does my mental model need to be load-bearing, and where can I gratefully use someone else's work?

The sameness is the feature

One common complaint about AI-assisted coding right now is that the UIs all look the same. Same fonts. Same gradients. Same component patterns. People treat this as evidence that something is missing — that the work is generic, that the soul is gone.

I think the opposite. The sameness is the feature.

In the BASIC and CP/M and DOS days, your UI was the default character set the machine handed you. Box-drawing characters. Inverse video. A handful of colors if you were lucky. You didn't build a visual identity, because building a visual identity wasn't on the table. You built the logic. The thing that did the thing.

That was a constraint, and it was generative. It pushed your design energy into the part of the software that was actually personal — the model, the methodology, the way it thought about your data. The visual shell was given, and the given-ness was a relief.

The AI sameness is the same gift. Personal software doesn't need a visual identity. It's not competing for attention in an app store, not trying to convey brand values, not getting reviewed on The Verge. It's a thing one person uses to do a thing. Letting the AI hand you a clean, competent default UI means you can spend all your attention on what's actually personal: the methodology, the categories, the way the thing fits your head.

The default floor has risen. Everything looks reasonably designed out of the box. For commercial software that's a problem — you need to differentiate. For personal software it's exactly right. The shell is given, so you can focus on what's yours.

The sophomore slump parallel

This is also a film school thought, so bear with me.

A first feature is often tight, weird, specific. Made on constraints — money, time, a single location, the actors you could get. The constraints aren't disciplinary. They're generative. They force decisions. They give the work a shape.

The follow-up gets ten times the budget and falls apart. It has to serve every audience the first one earned. It has to justify the investment. It loses the constraints that made the original work.

Commercial software is structurally a sophomore project. It has to serve every user, every methodology, every edge case, every potential paying customer, every accessibility requirement, every enterprise procurement checklist. That's not a criticism — that's the deal. But it explains why the experience of using commercial software so often feels bloated. It is.

Personal software stays in debut mode. Scoped, weird, specific. Mine has exactly the features I use, in exactly the arrangement that fits my head. That's not because I'm a better designer than the YNAB team. It's because I have one user.

The part nobody talks about

There's one more thing I want to surface, because the productivity framing has eaten the entire AI discourse and it's making us miss something.

I built Simple Chores partly because it was fun. I'd never done that kind of project before. I had no idea what I was doing with Home Assistant's app framework. It was an evening hobby. Same energy as typing BASIC programs out of a magazine to see what they did.

That kind of building — speculative, unprofitable, just-to-see — was a huge part of why the home computer era mattered to people who lived through it. It wasn't all productivity. A lot of it was play. You built a thing because you wanted to know if you could.

AI-assisted coding has brought that back, and almost no one is talking about it because the discourse is locked into ten-engineers-replaced-by-one framing. Some of the best output from this technology, in my own life, has nothing to do with productivity. It's the return of personal software, yes. But it's also the return of personal programming — the practice, the play, the tinkering. The part where you build something for yourself just to see what happens.

That part is harder to put in a deck. But it's real, and if you've felt it, you know.

So, is it crazy to just build my own?

For most things, still yes. Build your own payments processor: crazy. Build your own CRM: still crazy. Build your own collaboration tool for a team of fifty: crazy. SaaS continues to do exactly what SaaS is good at, and the AI hype declaring otherwise will look as silly in three years as the no-code hype did.

But for the personal layer — the budget that has to fit your method, the chore tracker that has to fit your household, the dozen little utilities that fit your life and only your life — the answer has quietly flipped.

A day and a half. Spreadsheet logic, my categories, my interface. Used every day.

It's not crazy anymore. That's the story.